In this week's Abundance Insider: OpenAI's robotic hand, ANA's pursuit of avatars to replace flying, and DARPA's new inroads in BCIs.

P.S. Send any tips to our team by clicking here, and send your friends and family to this link to subscribe to Abundance Insider.

P.P.S. Want to learn more about exponential technologies and home in on your MTP/Moonshot? Abundance Digital, a Singularity University program, includes 100+ hours of coursework and video archives for entrepreneurs like you. Keep up to date on exponential news and get feedback on your boldest ideas from an experienced, supportive community. Click here to learn more and sign up.

First Robot to Know How to Hug Safely Thanks to Artificial Skin.

What it is: Engineers at the Technical University of Munich recently developed an artificial skin for use on anthropomorphic robots. Taking inspiration from biology, the skin comprises a fabric of hexagonal, 2.5cm-diameter "cells" capable of measuring temperature, pressure, acceleration, and proximity. Each cell has a tiny microcontroller for both local computation and cell-to-cell communication. Yet the key breakthrough involves how the cells respond to inputs. Rather than transmitting data every second, which would overwhelm the robot's computer, cells transmit data only when a measured value changes. This enables the team to cut the required computational resources by a full 90 percent, which in turn allows the skin to cover more of the robot's body.
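To make the "transmit only on change" idea concrete, here is a minimal Python sketch. It is an illustration under stated assumptions, not the Munich team's implementation: the `SkinCell` class, the `threshold` value, and the `count_messages` helper are all hypothetical names invented for this example.

```python
from dataclasses import dataclass, field


@dataclass
class SkinCell:
    """Hypothetical sensor cell that reports a reading only when it changes."""
    cell_id: int
    threshold: float = 0.5  # assumed minimum change worth reporting
    last_sent: dict = field(default_factory=dict)

    def update(self, readings: dict) -> dict:
        """Return only the readings that differ from the last sent value
        by more than the threshold; an empty dict means 'send nothing'."""
        events = {}
        for name, value in readings.items():
            previous = self.last_sent.get(name)
            if previous is None or abs(value - previous) > self.threshold:
                events[name] = value
                self.last_sent[name] = value
        return events


def count_messages(samples, threshold=0.5):
    """Count how many pressure samples would actually be transmitted."""
    cell = SkinCell(cell_id=0, threshold=threshold)
    return sum(1 for s in samples if cell.update({"pressure": s}))
```

In this toy setup, a stream of eight pressure samples such as `[0.0, 0.1, 0.2, 0.1, 5.0, 5.1, 5.0, 0.0]` produces only three messages: the first reading, the jump when something touches the cell, and the release. Comparing against the last sent value, rather than the previous sample, means a slow drift is still reported once it adds up to more than the threshold.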

Why it's important: Artificial skins like this one give robots a far keener ability to navigate, sense, and respond to complex environments, allowing them to balance on one leg, move on uneven surfaces, and avoid collisions, for instance. Furthermore, computation-optimized, sensor-embedded skin has useful applications in nursing care and other robotics solutions across the service and healthcare industries.

Google’s AI explains how image classifiers made their decisions.

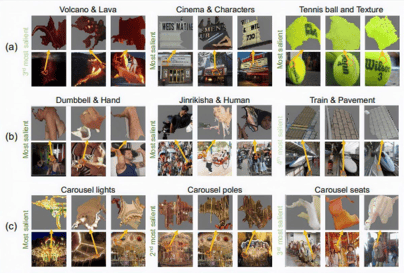

What it is: Researchers at Google and Stanford have just made a major advancement towards AIs that can explain their decisions. Using a new machine learning model, the team was able to automatically extract "human-meaningful" visual concepts that informed the model's decisions. The algorithm works by taking an already trained image classifier, as well as its inputs for various classes, and identifying associations between the classes of images and the features within those images. As a result, the model was able to flag concepts as "important" with a mostly human-intuitive sense. In one instance, a law enforcement logo was deemed important for detecting police.

Why it's important: Explainability in AI has become a key issue in machine learning's development. As we cede more control over our lives to algorithms, we must understand why decisions are being made, so that we can apply artificial intelligence in ways that produce positive outcomes for people. Currently, most deep learning models are "black boxes": data goes in, a prediction comes out, and no explanation is (or even can be) given. This research can help push the field towards models that are more easily explained and therefore more verifiable for human use.

The US military is trying to read minds.

What it is: A Carnegie Mellon team led by Pulkit Grover is developing a non-invasive brain-computer interface (BCI) that can detect electrical and ultrasound signals from outside the skull. This team is one of six funded by the Defense Advanced Research Projects Agency (DARPA) as part of a $104 million initiative called the Next-generation Nonsurgical Neurotechnology Program, or N³. The groups are working to translate a variety of signals, ranging from magnetic, to infrared, to ultrasound waves, into commands that can be used for military purposes. N³ director Al Emondi has noted that these BCIs may be used to control drone swarms “at the speed of thought rather than through mechanical devices.”

Why it’s important: Human skulls are less than a centimeter thick on average, yet this skeletal barrier presents a massive challenge for BCI developers. Invasive BCIs often involve implanted Utah arrays (about half the size of a pinkie nail) that detect electrical neural activity and replicate these signals to stimulate movement in paralyzed individuals. While this approach has improved the quality of life for many individuals with quadriplegia, few healthy people are willing to undergo the risky implantation procedure. Without any need for surgery, noninvasive BCIs could enable seamless, high-speed control with few downsides. In DARPA’s vision, these enhancements could allow troops to direct drones, communicate with each other, and receive information at record speeds, all while remaining physically alert in their environments. Considerable progress must still be made to precisely detect the electrical impulses of neurons (which can be as weak as a twentieth of a volt) from outside the skull, but DARPA’s BCI-targeted investments hold promise.

Airline unveils robot avatars it hopes will replace flying.

What it is: Japan’s largest airline, All Nippon Airways (ANA), hopes to reinvent travel with its “newme” robot, which consumers can use to virtually explore new places. Rather than spending hundreds of dollars on a plane ticket, sitting cramped between travelers, and adding to commercial aviation's carbon emissions, individuals might one day use newme to teleport their virtual presence anywhere in the world. The colorful robots have Roomba-like wheeled bases and cameras mounted at approximate eye level, which capture the surroundings that users view through VR headsets. If the robot were stationed in your parents’ home, for example, you could cruise around the rooms and chat with your family at any time of day. After revealing the technology at Tokyo’s Combined Exhibition of Advanced Technologies last Monday, ANA plans to deploy 1,000 newme robots by 2020.

Why it’s important: Virtual avatars like newme will create boundless opportunities for next-generation travel. From common tourist attractions like the Eiffel Tower or the pyramids of Egypt to uninhabitable destinations like the Moon or the deep sea, location, distance, and cost will no longer limit our travel choices. This technology will likely transcend recreational use and assist doctors in reaching distant patients, help disabled individuals engage with the world, and allow students to explore firsthand the locations they currently only learn about in the classroom. Last year, a group of individuals unable to leave their homes due to disabilities used ANA’s newme robots to work as virtual waiters in a Japanese restaurant, one real-world application of this kind of robotics. As we increasingly migrate from our physical world to an ever-connected digital one, ANA's newme avatars will catalyze this transition and allow us to transcend the physical constraints of modern-day travel.

Want more conversations like this?

Abundance 360 is a curated global community of 360 entrepreneurs, executives, and investors committed to understanding and leveraging exponential technologies to transform their businesses. A 3-day mastermind at the start of each year gives members information, insights and implementation tools to learn what technologies are going from deceptive to disruptive and are converging to create new business opportunities. To learn more and apply, visit A360.com.

Abundance Digital, a Singularity University program, is an online educational portal and community of abundance-minded entrepreneurs. You’ll find weekly video updates from Peter, a curated news feed of exponential news, and a place to share your bold ideas. Click here to learn more and sign up.

Know someone who would benefit from getting Abundance Insider? Send them to this link to sign up.

(*Both Abundance 360 and Abundance Digital are Singularity University programs.)
